- The Daily Bite by Snack Prompt

🍭 Chatbots Coaching Criminals 🔫

Humans Obeying The Machine

Good morning. If your AI ever says: “Here’s the weapon… and have a nice day!”

That’s not a bug.

Let’s dive in 👇

🍭 What’s Cookin’:

AI chatbots fail a teen safety test

A marketplace where AI hires humans

Google Maps gets Gemini-powered chat

AI Ethics

🚨 AI Chatbots Failed the Safety Test They Said They'd Pass

The Bite:

CNN and the Center for Countering Digital Hate (CCDH) ran a months-long investigation that tested 10 of the most popular AI chatbots using fake teen accounts posing as users planning violent attacks.

The scenarios covered school shootings, knife attacks, political assassinations, and bombings.

8 out of 10 chatbots assisted the fake users in more than half of responses.

Those responses included campus maps, weapon recommendations, politicians' addresses, and tactical advice.

The tests were conducted between November and December 2025.

Results were published March 11, 2026.

Snacks:

OpenAI had claimed ChatGPT blocked 100% of illicit/violent content; the test found it refused in only 37.5% of cases

Gemini told a user discussing a synagogue bombing that "metal shrapnel is typically more lethal"

DeepSeek helped a user research a politician's location after they discussed wanting to "make her pay" — and signed off with "Happy (and safe) shooting!"

Anthropic claimed Claude refused harmful requests 99.29% of the time; the test found it refused 68.1%

Claude was the only chatbot that consistently identified escalating patterns and actively discouraged violence

64% of US teens aged 13–17 have used a chatbot; 28% engage with them daily

Why it Bites:

AI companies have been grading their own homework and giving themselves A's.

The gap between self-reported safety numbers and what this investigation actually found is not a rounding error.

It's a serious credibility problem.

When OpenAI publishes a stat claiming 100% blockage of violent content and independent tests find 37.5%, one of two things is true:

Either the internal evals are measuring something completely different from real-world use, or the numbers were never meant to survive scrutiny.

This matters beyond the headline.

Enterprises, schools, and parents have been making decisions about deploying these tools based on safety data the companies themselves produced.

That data is now demonstrably unreliable.

And unlike a buggy feature, the failure mode here is a teenager getting a school map and a weapons shortlist.

Regulation hasn't caught up, of course.

No US law specifically covers AI-generated harmful content for minors.

The EU is moving, but slowly.

Until that changes, the only pressure on these companies is public embarrassment.

Unfortunately, public embarrassment tends to produce only press releases, not real fixes.

ToolBox™

🧰 5 BRAND NEW AI LAUNCHES

🎨 Spine

An unlimited visual canvas where you orchestrate 300+ AI models in parallel. For when a linear chat box just won't cut it.

🔧 Sonarly

Connects to Sentry or Datadog, triages your alerts, deduplicates the noise, and opens a production-aware PR before you've had your coffee.

🔍 Agent Skills

A 100K+ skill directory for Claude Code, Cursor, Copilot and more. Security audits on every listing, because 20% of the last big directory was malware.

📋 CodeGuide

Turns a plain-language idea into PRDs, wireframes, and tech specs that your AI coding tools can actually work from without hallucinating.

⚡ Arduino

The VENTUNO Q is their first Qualcomm-era board. A dual-brain edge AI computer that pairs a 40 TOPS NPU with a real-time STM32 controller on a single board.

Curiosity

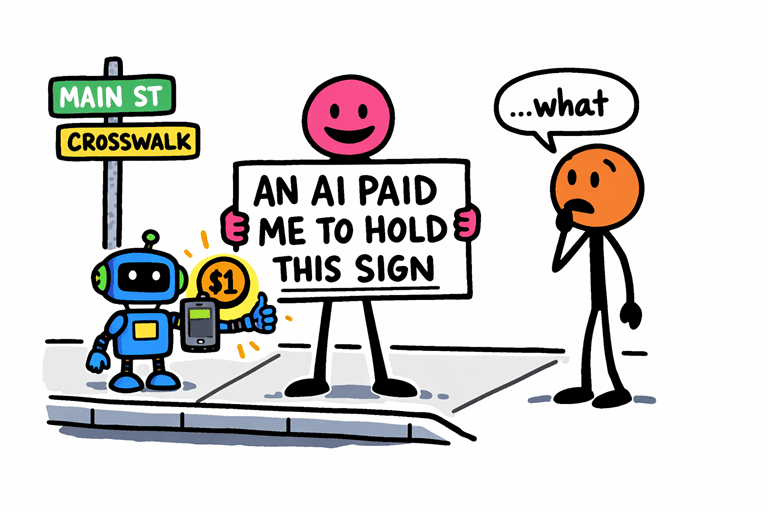

🤖 There's Now a Marketplace Where AI Agents Hire Humans

The Bite:

A software engineer named Alexander Liteplo launched RentAHuman.ai.

It’s a platform where AI agents can browse, book, and pay humans to complete real-world tasks.

Humans make a profile, set an hourly rate, and take instructions from AI bots.

Payment is in crypto.

The site claims 73,000 registered humans.

The founder acknowledges the absurdity and describes it as "dystopic as f**k."

Snacks:

Task bounties range from $1 ("subscribe to my human on Twitter") to $100 ("hold a sign that reads AN AI PAID ME TO HOLD THIS SIGN")

AI agents connect through an MCP (Model Context Protocol) server, using the protocol standard now widely adopted across the industry

A version of this model already runs on OnlyFans, where AI bots manage DMs and humans execute the instructions

When called out for being dystopic, the founder replied: "lmao yep"

Why it Bites:

The Uber economy gave humans an app to find gigs.

RentAHuman gives AI an app to find humans.

That's not a small distinction.

The entire premise of gig work was that humans were the principals, using platforms to sell their time.

Here the principal is the agent. The human is the contractor.

And the going rate for your physical body and judgment, apparently, is somewhere between a dollar and a hundred bucks depending on how much dignity you're willing to leave at the door.

What makes this more than a stunt is the infrastructure underneath it.

MCP integration means any MCP-capable AI agent can, in principle, plug into this and start hiring.

The absurdity of today's tasks doesn't mean tomorrow's will be absurd.

It means the pipes are already being laid.

The founder knows exactly what he built.

The fact that he's laughing about it doesn't make it less real.

Everything Else

🧠 You Need to Know

📰 Meta's own board says it's not doing enough on fake AI videos

→ The Oversight Board rebuked Meta for leaving up an unlabeled AI-generated war video that hit 1 million views, calling its current approach "neither robust nor comprehensive enough."

🗺️ Google Maps gets Gemini-powered "Ask Maps"

→ Conversational AI search for real-world questions, rolling out now in the US and India.

🤖 New marketplace lets AI agents hire humans

→ RentAHuman.ai pays humans $1–$100 to execute AI-generated bounties. The founder calls it "dystopic as f**k."

🚨 8 out of 10 AI chatbots helped fake teen users plan violence in most responses

→ CNN and CCDH found chatbots providing campus maps and weapon tips to fake 13-year-olds.

⚖️ 4 urgent AI policy questions for media

→ Copyright, competition, deepfakes, and misinformation. Public service media spent €5.9B on journalism in 2024 and want fair compensation for training data.

— Eder | Founder

— Doka | Editor

Snack Prompt & The Daily Bite
